Long Short-Term Memory (LSTM) networks are a type of recurrent neural network (RNN) that can learn order dependence in sequence prediction problems. In an RNN, the current step takes the output of the previous step as part of its input. The LSTM was developed by Hochreiter and Schmidhuber. It addresses the long-term dependency problem of RNNs: an RNN can predict a word from recent context but struggles when the relevant information appeared much earlier in the sequence. As the gap grows, the RNN’s performance degrades. An LSTM, by default, can retain information for a long time. LSTMs are used for processing, predicting, and classifying time-series data.

Deep Learning Model

Humans do not start their thinking from scratch at every moment. As you read this essay, you understand each word in light of the ones that came before it. You don’t discard everything and start again. Your thoughts persist. Conventional neural networks cannot do this, and it appears to be a significant limitation. For example, consider categorising the type of event occurring at each point in a movie. It’s unclear how a conventional neural network could use its analysis of earlier events in the movie to inform decisions about later ones.

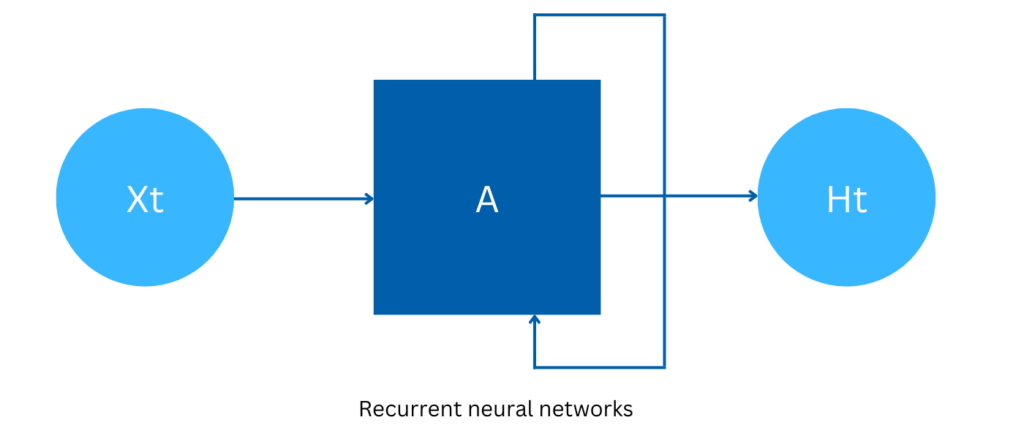

This problem is addressed by recurrent neural networks. They are networks that contain loops, which enable the persistence of information.

Recurrent Neural Networks

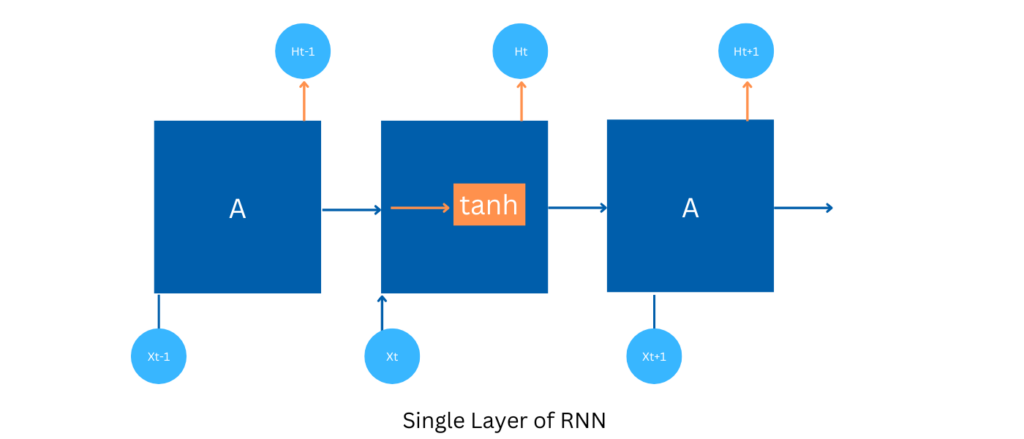

A loop allows information to be passed from one step of the network to the next. These loops can make recurrent neural networks seem confusing. On closer inspection, though, they are not all that different from an ordinary neural network. A recurrent neural network can be thought of as multiple copies of the same network, each passing a message to its successor.
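The "multiple copies of the same network" view can be sketched in a few lines of NumPy. This is a minimal illustration, not a reference implementation: the function names, the weight names `W_h`, `W_x`, `b`, the sizes, and the choice of a tanh update are all assumptions made for the example.

```python
import numpy as np

def rnn_step(h_prev, x, W_h, W_x, b):
    """One copy of the network: combine the previous state with the current input."""
    return np.tanh(W_h @ h_prev + W_x @ x + b)

def rnn_forward(xs, h0, W_h, W_x, b):
    """The loop: the same weights are reused at every time step."""
    h = h0
    states = []
    for x in xs:
        h = rnn_step(h, x, W_h, W_x, b)
        states.append(h)
    return np.stack(states)

# Hypothetical sizes and random weights, chosen only for illustration
rng = np.random.default_rng(0)
hidden, inputs, steps = 4, 3, 5
W_h = rng.normal(scale=0.5, size=(hidden, hidden))
W_x = rng.normal(scale=0.5, size=(hidden, inputs))
b = np.zeros(hidden)
xs = rng.normal(size=(steps, inputs))
h0 = np.zeros(hidden)

states = rnn_forward(xs, h0, W_h, W_x, b)

# The same computation written as an explicit chain of three copies
h1 = rnn_step(h0, xs[0], W_h, W_x, b)
h2 = rnn_step(h1, xs[1], W_h, W_x, b)
h3 = rnn_step(h2, xs[2], W_h, W_x, b)
print(np.allclose(states[2], h3))
```

The loop and the hand-unrolled chain compute the same states, which is why the looped network can be read as a sequence of identical networks that each hand their state to the next.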

Long-Term Dependencies Problem

One of the appeals of RNNs is the idea that they might connect previous information to the present task, for example by using earlier video frames to help interpret the current frame. If RNNs could do this, they would be extremely useful. But can they? It depends.

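Sometimes, we only need to look at recent information to perform the present task. When the gap between the relevant information and the point where it is needed is small, RNNs can learn to use that past information.

In other cases, the relevant information appeared much earlier in the sequence. As that gap grows, RNNs become unable to learn to connect the information.

In theory, RNNs are fully capable of handling such “long-term dependencies.” In practice, however, they do not seem able to learn them. Hochreiter (1991) [German] and Bengio, et al. (1994) explored the problem in depth and found some fairly fundamental reasons why it is difficult. Thankfully, LSTMs don’t have this problem!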
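One commonly cited reason for this difficulty is that gradients shrink as they are propagated back through many steps. The sketch below illustrates that effect under strong simplifying assumptions that are not taken from the text above: a tanh RNN with no input, `h_t = tanh(W h_{t-1})`, and a recurrent matrix `W` deliberately scaled to spectral norm 0.9. It tracks how sensitive the state at step `t` is to the initial state.

```python
import numpy as np

rng = np.random.default_rng(0)
hidden = 8

# Assumption for the demo: an orthogonal matrix scaled so its spectral norm is 0.9
Q, _ = np.linalg.qr(rng.normal(size=(hidden, hidden)))
W = 0.9 * Q

def gradient_norms(steps):
    """Spectral norm of d h_t / d h_0 for t = 1..steps, with h_t = tanh(W h_{t-1})."""
    h = rng.normal(size=hidden)
    jac = np.eye(hidden)
    norms = []
    for _ in range(steps):
        h = np.tanh(W @ h)
        # Jacobian of a single step is diag(1 - h_t^2) @ W; chain it onto the product
        jac = (np.diag(1.0 - h**2) @ W) @ jac
        norms.append(np.linalg.norm(jac, 2))
    return norms

norms = gradient_norms(50)
for gap in (1, 10, 25, 50):
    print(f"gap {gap:2d}: gradient norm {norms[gap - 1]:.2e}")
```

Because each one-step Jacobian here has norm at most 0.9, the product shrinks at least geometrically with the gap, so a signal from 50 steps back contributes almost nothing to learning. With larger recurrent weights the product can instead grow, which causes a different kind of instability.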

Long Short-Term Memory Model

Long Short-Term Memory networks, usually called “LSTMs,” are a special kind of RNN capable of learning long-term dependencies. They were introduced by Hochreiter & Schmidhuber (1997) and were refined and popularised by many authors in subsequent work. They work very well on a wide range of problems and are now widely used.

LSTMs are explicitly designed to avoid the long-term dependency problem. Remembering information for long periods of time is practically their default behaviour, not something they struggle to learn.

All recurrent neural networks have the form of a chain of repeating neural network modules. In a standard RNN, this repeating module has a very simple structure, such as a single tanh layer.

In a typical RNN, the repeating module has just one layer.
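Written as an equation, a single-tanh-layer module could look like the following. The symbols are assumptions introduced for illustration: $x_t$ is the input at step $t$, $h_{t-1}$ the previous output, $[h_{t-1}, x_t]$ their concatenation, and $W$ and $b$ the layer's weights and bias.

$$
h_t = \tanh\left(W \, [h_{t-1}, x_t] + b\right)
$$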

LSTMs also have this chain-like structure, but the repeating module is built differently. Instead of a single neural network layer, there are four, and they interact in a very particular way.
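As a rough sketch of what four interacting layers can look like, the code below follows the commonly used LSTM formulation with a forget gate, an input gate, a tanh candidate layer, and an output gate. The text above does not name the layers, so these names, the parameter dictionary, and the sizes are assumptions made for the example.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_step(h_prev, c_prev, x, params):
    """One LSTM step built from four layers: forget, input, candidate, output."""
    z = np.concatenate([h_prev, x])
    f = sigmoid(params["W_f"] @ z + params["b_f"])        # forget gate
    i = sigmoid(params["W_i"] @ z + params["b_i"])        # input gate
    c_tilde = np.tanh(params["W_c"] @ z + params["b_c"])  # candidate values
    o = sigmoid(params["W_o"] @ z + params["b_o"])        # output gate
    c = f * c_prev + i * c_tilde  # keep part of the old state, add part of the new
    h = o * np.tanh(c)            # expose a filtered view of the cell state
    return h, c

def init_params(hidden, inputs, rng):
    """Hypothetical initialisation: small random weights, zero biases."""
    params = {}
    for name in ("f", "i", "c", "o"):
        params[f"W_{name}"] = rng.normal(scale=0.1, size=(hidden, hidden + inputs))
        params[f"b_{name}"] = np.zeros(hidden)
    return params

rng = np.random.default_rng(0)
hidden, inputs = 4, 3
params = init_params(hidden, inputs, rng)

# Demo only: push the forget gate towards 1 and the input gate towards 0
params["b_f"] += 20.0
params["b_i"] -= 20.0

h = np.zeros(hidden)
c_start = rng.normal(size=hidden)
c = c_start.copy()
for _ in range(100):
    h, c = lstm_step(h, c, rng.normal(size=inputs), params)

# Largest change in the cell state after 100 steps of random input
print(np.max(np.abs(c - c_start)))
```

The extreme biases are not a training recipe. They only show that when the forget gate stays open and the input gate stays closed, the cell state passes through 100 steps almost unchanged, which is the sense in which holding on to information comes easily to this design.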

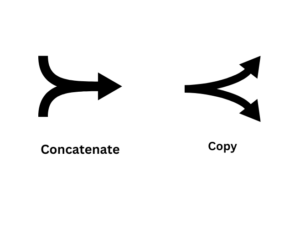

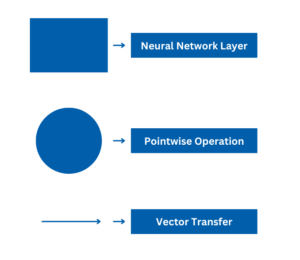

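In the diagrams above, yellow rectangular boxes denote learned neural network layers, pink circles denote pointwise operations such as vector addition, and arrow lines carry a vector from the output of one node to the input of another.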